
In this tutorial we will create a WebAPI application with the full version of ASP.NET. We will then host it with IIS in a Windows Server Core instance using Windows Containers and Docker.

If you have Windows 10 Pro or Enterprise installed on your PC or laptop then there's some great news for you. You can run Windows Nano Server and Windows Server Core without having to set up Windows Server 2016 in a virtual machine!

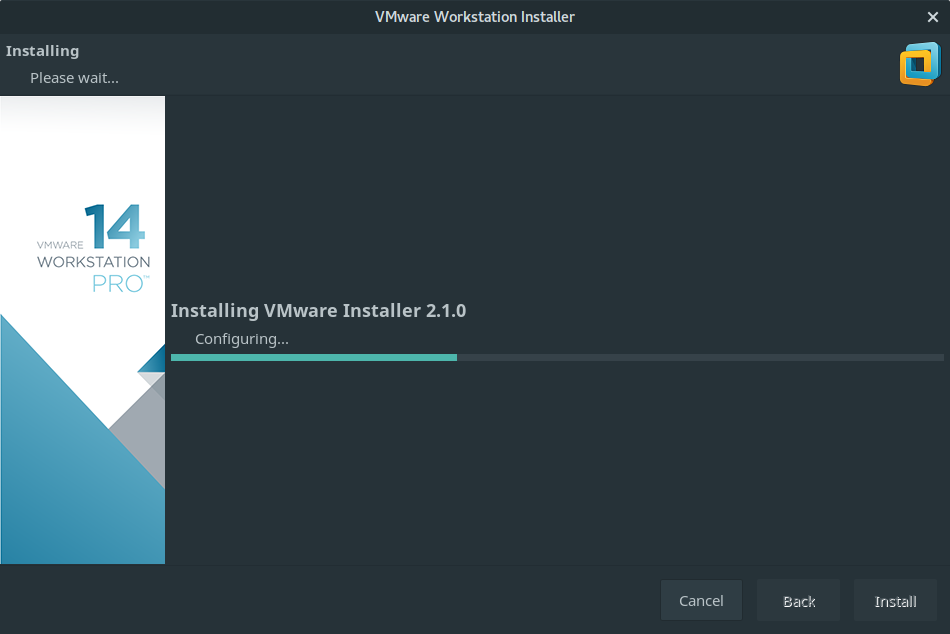

If you're following along now then you'll need Windows 10 to hand and will have already installed Docker with the instructions available here:

- As of 1.12.2 Beta 26, Docker for Windows allows you to switch between Windows and Linux containers. The installer is an MSI, so it appears to set up the correct permissions too, and you don't have to go through all the manual steps to get Windows containers working. I'm using 1.12.2 Beta 28 on Windows 10.



Microsoft has provided two images for the new Windows Server editions: Server Core and Nano Server. Nano Server is being pitched as a minimalist OS - so minimal that it lacks a full version of PowerShell and cannot install programs using MSI files.

We will pull down a Windows Server Core image as a basis for our container.

docker run

Let's start a command prompt in a Docker container to check that everything worked. If it's the first time you've run this command, Docker will pull down around 4GB of image data.
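A minimal sketch of that command, wrapped in a bash function so it can be sourced from a shell that can reach Docker (for example Git Bash). In PowerShell, type the `docker run` line directly. The image name `microsoft/windowsservercore` is an assumption based on the Docker Hub name Microsoft used when this post was written, so check the current name and tag for your Windows build.

```shell
# Start an interactive cmd.exe prompt inside a Windows Server Core container.
# Assumption: the image is published as microsoft/windowsservercore; adjust name/tag as needed.
servercore_prompt() {
  # -i keeps STDIN open and -t allocates a TTY, so cmd.exe stays interactive.
  docker run -it microsoft/windowsservercore cmd
}
```

Run `servercore_prompt` and type `exit` to leave the container.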

My system started a Windows Server Core container and gave me a minimal filesystem. How long did this take? For me it was around 5 seconds, because Windows 10 uses Hyper-V isolation, which launches each container inside its own lightweight virtual machine.

If you want to clean up your containers then it turns out PowerShell has the same syntax as bash: docker rm -vf $(docker ps -qa)

Visual Studio 15

In the meantime install Visual Studio 15 Community edition so that we can create an ASP.NET application.

The download and installation will take some time. If you'd rather not create a project yourself, you can skip the next step and use the sample code from my GitHub repository alexellis/guidgenerator-aspnet instead, but you will still need to build it through Visual Studio or msbuild.

Create a WebAPI application

Create a WebAPI application, build it and save it.

Click Web and then .NET 4.5.1.

Pick Web API.

I didn't spend too long here: I just edited the ValuesController so that it returns a new GUID for us. The full code is in the alexellis/guidgenerator-aspnet repository on GitHub.

I then ran the code on my own machine in debug mode (hit F5 in Visual Studio) to check that it returned a GUID.

Next we'll create a Dockerfile for IIS and .NET and then finally run the code in a Windows Container through Docker.

.NET Dockerfile?

Let's create an outline for a .NET + IIS Dockerfile then enhance it.

There is a base image provided by Microsoft that already contains IIS, and we'll use it as a template. As best practice dictates, we'll pin ourselves to a specific tag (version) of the image.

You can see all the tags on the Microsoft repo on the Docker Hub.

We'll introduce a new Dockerfile instruction called SHELL which allows us to specify which shell or command line interpreter to use for each RUN step. The SHELL value could be cmd or powershell or something completely different.

Read about the SHELL instruction in the Dockerfile reference.

To make sure we get only a single layer for the .NET and ASP.NET features, we will install both in one RUN step: a ; separates each command and a \ at the end of a line continues the step onto the next line.
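Here is a sketch of that outline. It is written as a bash heredoc only to keep every example in one form; you can equally paste the lines between the `EOF` markers into a file named `Dockerfile`. The `microsoft/iis` tag is an example pin, so pick a tag from the Docker Hub list. The two feature names are the standard Windows Server 2016 names for .NET 4.5 and ASP.NET 4.5.

```shell
# Write an outline Dockerfile for IIS + ASP.NET (tag below is an example pin).
cat > Dockerfile <<'EOF'
# Pin a specific tag rather than relying on "latest".
FROM microsoft/iis:10.0.14393.206

# Run every following RUN step through PowerShell instead of cmd.
SHELL ["powershell", "-Command"]

# One RUN step, and therefore one layer: ';' separates the PowerShell commands
# and the trailing backslash (the default Dockerfile escape character) continues the line.
RUN Install-WindowsFeature NET-Framework-45-ASPNET ; \
    Install-WindowsFeature Web-Asp-Net45
EOF
```

Build it from the same directory with `docker build .` to watch PowerShell report on each feature as it installs.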

When you start to build this Docker image, PowerShell reports useful progress information as it installs each feature.

Edit the Dockerfile

At this point we'll add the code to the container and create a new site in IIS along with an application pool. We also want to remove the default website because less is more.

If you think the CMD instruction looks out of place then you're right. We need to give the container a long-running task otherwise it will quit immediately.
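A sketch of the finished Dockerfile under a few stated assumptions. The built WebAPI project is in a local folder called `GuidGenerator`, and the site and app pool are both named `guidgenerator`; all of these names are hypothetical. It uses the IIS WebAdministration cmdlets `Remove-Website`, `New-WebAppPool` and `New-Website`, and keeps `ping` as the long-running task.

```shell
# Write the complete Dockerfile: features, application code, IIS site and app pool.
cat > Dockerfile <<'EOF'
FROM microsoft/iis:10.0.14393.206
SHELL ["powershell", "-Command"]

RUN Install-WindowsFeature NET-Framework-45-ASPNET ; \
    Install-WindowsFeature Web-Asp-Net45

# Copy the built project (hypothetical folder name) into the image.
COPY GuidGenerator C:/guidgenerator

# Less is more: remove the default site, then add our own app pool and site on port 80.
RUN Remove-Website -Name 'Default Web Site' ; \
    New-WebAppPool -Name 'guidgenerator' ; \
    New-Website -Name 'guidgenerator' -Port 80 -PhysicalPath 'C:\guidgenerator' -ApplicationPool 'guidgenerator'

EXPOSE 80

# Long-running foreground task so the container does not exit immediately.
CMD ["ping", "-t", "localhost"]
EOF
```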

Docker's Michael Friis pointed out that the ping command may fill up the container logs and suggested using a wait loop instead. It would look a bit like this:
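One way to express that loop, as a sketch: an exec-form CMD that runs PowerShell and sleeps for an hour at a time. The one-hour interval is an arbitrary choice. Docker honours only the last CMD in a Dockerfile, so this snippet simply appends the line. Replacing the existing CMD by hand is cleaner if you prefer.

```shell
# Append a CMD that keeps the container alive without writing anything to the logs.
# Because only the last CMD takes effect, this overrides an earlier ping-based CMD.
printf '%s\n' 'CMD ["powershell", "-Command", "while ($true) { Start-Sleep -Seconds 3600 }"]' >> Dockerfile
```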

Update 6th Jan 2017: Microsoft has started to bundle ServiceMonitor.exe with the IIS container which means you no longer need the above while/sleep loop.

Create an image

Now build the image and run it:

Use -p 80:80 to expose port 80 from IIS.
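A sketch of the build and run steps as two bash functions; from PowerShell, type the inner commands directly. The image and container name `guidgenerator` is hypothetical.

```shell
# Build the image from the Dockerfile in the current directory.
build_guidgenerator() {
  docker build -t guidgenerator .
}

# Run it in the background; -p 80:80 publishes IIS's port 80 on the host.
run_guidgenerator() {
  docker run -d -p 80:80 --name guidgenerator guidgenerator
}
```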


Once the container is running, we'll need to find its IP address. We can pick it out of the metadata supplied by docker inspect.
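A minimal sketch using a Go template with `docker inspect`. It assumes the container is attached to `nat`, the default network for Windows containers. The argument is the container name or ID.

```shell
# Print the IP address of a container on the default Windows "nat" network.
container_ip() {
  docker inspect --format '{{ .NetworkSettings.Networks.nat.IPAddress }}' "$1"
}
```

For example, `container_ip guidgenerator` if you named the container that way.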

Use the container's IP address in a web browser or with curl to generate as many GUIDs as you like.
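A sketch of the curl request. It assumes the ValuesController is served on the Web API template's default `api/values` route, and it takes the container IP as its first argument.

```shell
# Fetch a fresh GUID from the running container (path assumes the default Web API route).
get_guid() {
  curl --silent "http://$1/api/values"
}
```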

Run metrics

You can even use Apache Bench to generate some metrics. If you have the Windows Subsystem for Linux (Bash on Ubuntu on Windows) installed, install the apache2-utils package, which provides ab, and run some tests.
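A sketch of the install and a benchmark run. The request count (`-n`) and concurrency (`-c`) are arbitrary, and the URL again assumes the default `api/values` route. Pass the container IP as the argument.

```shell
# Install Apache Bench on Ubuntu (it ships in apache2-utils).
install_ab() {
  sudo apt-get update && sudo apt-get install -y apache2-utils
}

# Send 1000 requests, 10 at a time, and print ab's latency/throughput summary.
bench_guids() {
  ab -n 1000 -c 10 "http://$1/api/values"
}
```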

Wrapping up

So to wrap up, we've just:

- Started the new Windows Server Core edition on Windows 10

- Used Hyper-V isolation

- Built a full WebAPI / ASP.NET application on Windows with Visual Studio 15

- Built a new container with IIS

- Ran metrics on our WebAPI application


The GUID generator source code is available in my GitHub repository alexellis/guidgenerator-aspnet.

You can use this sample if you did not create a brand new project of your own, but make sure you carry out a NuGet restore and build the code first.

Enjoyed the tutorial? 🤓💻

Follow me on Twitter @alexellisuk to keep up to date with new content. Feel free to reach out if you have any questions, comments, suggestions.


Hire me to help you with Kubernetes / Cloud Native

Hire me via OpenFaaS Ltd by emailing sales@openfaas.com, or through my work calendar. Let me know whether you need help with Windows Containers .NET/.NET Core migration, Jenkins or something else.

See also

Elasticsearch is also available as Docker images. The images use centos:8 as the base image.

A list of all published Docker images and tags is available at www.docker.elastic.co. The source files are in GitHub.

This package contains both free and subscription features. Start a 30-day trial to try out all of the features.

Obtaining Elasticsearch for Docker is as simple as issuing a docker pull command against the Elastic Docker registry.
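A minimal sketch, assuming the `7.12.0` tag that this section later uses as its pinning example. Swap in whichever version you pin.

```shell
# Pinned Elasticsearch image on the Elastic Docker registry (version is an example).
ES_IMAGE="docker.elastic.co/elasticsearch/elasticsearch:7.12.0"

pull_es() {
  docker pull "$ES_IMAGE"
}
```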

To start a single-node Elasticsearch cluster for development or testing, specify single-node discovery to bypass the bootstrap checks:
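A sketch of that command, again assuming the 7.12.0 tag. Ports 9200 (HTTP) and 9300 (transport) are the two the image exposes, and `discovery.type=single-node` is passed as an environment variable.

```shell
# Start a throwaway single-node cluster for development or testing.
run_es_single_node() {
  docker run -p 9200:9200 -p 9300:9300 \
    -e "discovery.type=single-node" \
    docker.elastic.co/elasticsearch/elasticsearch:7.12.0
}
```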


Starting a multi-node cluster with Docker Compose

To get a three-node Elasticsearch cluster up and running in Docker, you can use Docker Compose:
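A sketch of a `docker-compose.yml` matching the description that follows. It sets a 512MB heap via `ES_JAVA_OPTS`, publishes only es01 on 9200, uses the named volumes data01 to data03, and puts all nodes on one Docker network. The cluster name `es-docker-cluster` and network name `elastic` are illustrative choices, and the 7.12.0 tag is the example pin.

```shell
# Write a three-node docker-compose.yml (names and tag are illustrative).
cat > docker-compose.yml <<'EOF'
version: '2.2'
services:
  es01:
    image: docker.elastic.co/elasticsearch/elasticsearch:7.12.0
    container_name: es01
    environment:
      - node.name=es01
      - cluster.name=es-docker-cluster
      - discovery.seed_hosts=es02,es03
      - cluster.initial_master_nodes=es01,es02,es03
      - bootstrap.memory_lock=true
      - "ES_JAVA_OPTS=-Xms512m -Xmx512m"
    ulimits:
      memlock:
        soft: -1
        hard: -1
    volumes:
      - data01:/usr/share/elasticsearch/data
    ports:
      - 9200:9200
    networks:
      - elastic
  es02:
    image: docker.elastic.co/elasticsearch/elasticsearch:7.12.0
    container_name: es02
    environment:
      - node.name=es02
      - cluster.name=es-docker-cluster
      - discovery.seed_hosts=es01,es03
      - cluster.initial_master_nodes=es01,es02,es03
      - bootstrap.memory_lock=true
      - "ES_JAVA_OPTS=-Xms512m -Xmx512m"
    ulimits:
      memlock:
        soft: -1
        hard: -1
    volumes:
      - data02:/usr/share/elasticsearch/data
    networks:
      - elastic
  es03:
    image: docker.elastic.co/elasticsearch/elasticsearch:7.12.0
    container_name: es03
    environment:
      - node.name=es03
      - cluster.name=es-docker-cluster
      - discovery.seed_hosts=es01,es02
      - cluster.initial_master_nodes=es01,es02,es03
      - bootstrap.memory_lock=true
      - "ES_JAVA_OPTS=-Xms512m -Xmx512m"
    ulimits:
      memlock:
        soft: -1
        hard: -1
    volumes:
      - data03:/usr/share/elasticsearch/data
    networks:
      - elastic
volumes:
  data01:
    driver: local
  data02:
    driver: local
  data03:
    driver: local
networks:
  elastic:
    driver: bridge
EOF
```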

This sample docker-compose.yml file uses the ES_JAVA_OPTS environment variable to manually set the heap size to 512MB. We do not recommend using ES_JAVA_OPTS in production. See Manually set the heap size.

This sample Docker Compose file brings up a three-node Elasticsearch cluster. Node es01 listens on localhost:9200, and es02 and es03 talk to es01 over a Docker network.

Please note that this configuration exposes port 9200 on all network interfaces, and given how Docker manipulates iptables on Linux, this means that your Elasticsearch cluster is publicly accessible, potentially ignoring any firewall settings. If you don’t want to expose port 9200 and instead use a reverse proxy, replace 9200:9200 with 127.0.0.1:9200:9200 in the docker-compose.yml file. Elasticsearch will then only be accessible from the host machine itself.

The Docker named volumes data01, data02, and data03 store the node data directories so the data persists across restarts. If they don’t already exist, docker-compose creates them when you bring up the cluster.

Make sure Docker Engine is allotted at least 4GiB of memory. In Docker Desktop, you configure resource usage on the Advanced tab in Preferences (macOS) or Settings (Windows).

Docker Compose is not pre-installed with Docker on Linux. See docs.docker.com for installation instructions: Install Compose on Linux.

- Run docker-compose to bring up the cluster.

- Submit a _cat/nodes request to see that the nodes are up and running.
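A sketch of both steps, run from the directory holding `docker-compose.yml`. The `-d` flag, which detaches so the terminal stays free, is an optional choice. Without it, the logs stream to the console.

```shell
# Bring up the cluster defined in ./docker-compose.yml in the background.
es_cluster_up() {
  docker-compose up -d
}

# List the nodes that have joined; v=true adds column headers, pretty formats output.
es_cat_nodes() {
  curl -X GET "localhost:9200/_cat/nodes?v=true&pretty"
}
```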

Log messages go to the console and are handled by the configured Docker logging driver. By default you can access logs with docker logs. If you would prefer the Elasticsearch container to write logs to disk, set the ES_LOG_STYLE environment variable to file. This causes Elasticsearch to use the same logging configuration as other Elasticsearch distribution formats.

To stop the cluster, run docker-compose down. The data in the Docker volumes is preserved and loaded when you restart the cluster with docker-compose up. To delete the data volumes when you bring down the cluster, specify the -v option: docker-compose down -v.

See Encrypting communications in an Elasticsearch Docker Container and Run the Elastic Stack in Docker with TLS enabled.

The following requirements and recommendations apply when running Elasticsearch in Docker in production.

The vm.max_map_count kernel setting must be set to at least 262144 for production use.

How you set vm.max_map_count depends on your platform:

Linux

The vm.max_map_count setting should be set permanently in /etc/sysctl.conf. To apply the setting on a live system, run sysctl -w vm.max_map_count=262144 as root.
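To check a Linux host before starting the containers, here is a small helper. It is a sketch, and the function name is hypothetical. It reads the live kernel value and compares it with the 262144 minimum. It takes an optional file path so it can be tried against a fake value.

```shell
REQUIRED_MAX_MAP_COUNT=262144

# Succeeds (exit 0) when vm.max_map_count meets the production minimum.
max_map_count_ok() {
  # /proc/sys/vm/max_map_count holds the live value on Linux.
  local file="${1:-/proc/sys/vm/max_map_count}"
  local current
  current=$(cat "$file") || return 2
  [ "$current" -ge "$REQUIRED_MAX_MAP_COUNT" ]
}
```

For example, `max_map_count_ok || echo "raise vm.max_map_count first"`.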

macOS with Docker for Mac

The vm.max_map_count setting must be set within the xhyve virtual machine:

- From the command line, attach to the virtual machine's console with screen.

- Press enter and use sysctl to configure vm.max_map_count.

- To exit the screen session, type Ctrl a d.

Windows and macOS with Docker Desktop

The vm.max_map_count setting must be set via docker-machine.

Windows with Docker Desktop WSL 2 backend

The vm.max_map_count setting must be set in the docker-desktop container.
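Sketches of the three VM-based variants above, each applying the same `sysctl -w vm.max_map_count=262144` inside the VM Docker runs in. These are assumptions: the Docker for Mac tty path may differ between versions, the docker-machine VM is named `default` unless you pass another name, and `wsl.exe` is reachable from your shell.

```shell
# macOS (xhyve): attach to the VM console, run
#   sysctl -w vm.max_map_count=262144
# at its prompt, then detach with Ctrl-a d.
attach_docker_for_mac_vm() {
  screen ~/Library/Containers/com.docker.docker/Data/vms/0/tty
}

# docker-machine: run sysctl inside the named machine over SSH.
set_max_map_count_docker_machine() {
  docker-machine ssh "${1:-default}" sudo sysctl -w vm.max_map_count=262144
}

# Docker Desktop with the WSL 2 backend: run sysctl in the docker-desktop distro.
set_max_map_count_wsl2() {
  wsl.exe -d docker-desktop sysctl -w vm.max_map_count=262144
}
```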

Configuration files must be readable by the elasticsearch user

By default, Elasticsearch runs inside the container as user elasticsearch using uid:gid 1000:0.

One exception is OpenShift, which runs containers using an arbitrarily assigned user ID. OpenShift presents persistent volumes with the gid set to 0, which works without any adjustments.

If you are bind-mounting a local directory or file, it must be readable by the elasticsearch user. In addition, this user must have write access to the config, data and log dirs (Elasticsearch needs write access to the config directory so that it can generate a keystore). A good strategy is to grant group access to gid 0 for the local directory.

For example, to prepare a local directory for storing data through a bind-mount:
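A sketch of that preparation. It uses a hypothetical default directory name, `esdatadir`. The function creates the directory, grants the group read/write/execute, and sets the group to gid 0, which is the group of the container's elasticsearch user (uid:gid 1000:0).

```shell
# Prepare a host directory for bind-mounting as the Elasticsearch data directory.
prepare_es_datadir() {
  local dir="${1:-esdatadir}"
  mkdir -p "$dir"
  chmod g+rwx "$dir"
  chgrp 0 "$dir"   # may need sudo unless you are root or a member of gid 0
}
```

Then mount it with something like `-v "$PWD/esdatadir:/usr/share/elasticsearch/data"` on `docker run`.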

You can also run an Elasticsearch container using both a custom UID and GID. Unless you bind-mount each of the config, data and logs directories, you must pass the command line option --group-add 0 to docker run. This ensures that the user under which Elasticsearch is running is also a member of the root (GID 0) group inside the container.

As a last resort, you can force the container to mutate the ownership of any bind-mounts used for the data and log dirs through the environment variable TAKE_FILE_OWNERSHIP. When you do this, they will be owned by uid:gid 1000:0, which provides the required read/write access to the Elasticsearch process.

Increased ulimits for nofile and nproc must be available for the Elasticsearch containers. Verify that the init system for the Docker daemon sets them to acceptable values.

You can check the Docker daemon defaults for ulimits by running ulimit inside a throwaway container.

If needed, adjust them in the daemon or override them per container, for example with the --ulimit option of docker run.

Swapping needs to be disabled for performance and node stability. For information about ways to do this, see Disable swapping.

If you opt for the bootstrap.memory_lock: true approach, you also need to define the memlock: true ulimit in the Docker daemon, or explicitly set it for the container as shown in the sample compose file. When using docker run, you can set it with --ulimit memlock=-1:-1.
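A sketch covering the ulimit checks and overrides above. It uses `centos:8`, the base image named at the start of this section, as a throwaway probe. The 65535 open-files limit and the 7.12.0 tag are example values. `discovery.type=single-node` is added only so a lone container starts.

```shell
# Print the hard/soft open-file and process limits a container gets by default.
show_default_container_ulimits() {
  docker run --rm centos:8 /bin/bash -c 'ulimit -Hn && ulimit -Sn && ulimit -Hu && ulimit -Su'
}

# Start Elasticsearch with explicit ulimits; memlock=-1:-1 (unlimited) is what
# bootstrap.memory_lock=true needs.
run_es_with_ulimits() {
  docker run \
    --ulimit nofile=65535:65535 \
    --ulimit memlock=-1:-1 \
    -e "bootstrap.memory_lock=true" \
    -e "discovery.type=single-node" \
    docker.elastic.co/elasticsearch/elasticsearch:7.12.0
}
```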

The image exposes TCP ports 9200 and 9300. For production clusters, randomizing the published ports with --publish-all is recommended, unless you are pinning one container per host.

By default, Elasticsearch automatically sizes JVM heap based on a node’s roles and the total memory available to the node’s container. We recommend this default sizing for most production environments. If needed, you can override default sizing by manually setting JVM heap size.

To manually set the heap size in production, bind mount a JVM options file under /usr/share/elasticsearch/config/jvm.options.d that includes your desired heap size settings.
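A sketch of that approach. It writes an options file with equal minimum and maximum heap, then bind-mounts it into `jvm.options.d`. The `2g` default and the file name `heap.options` are examples. Custom files in that directory need the `.options` suffix.

```shell
# Write heap.options with -Xms and -Xmx set to the same size (default 2g).
write_heap_options() {
  local size="${1:-2g}"
  printf -- '-Xms%s\n-Xmx%s\n' "$size" "$size" > heap.options
}

# Mount it into the config directory (bind mounts need an absolute host path).
run_es_with_heap_file() {
  docker run \
    -v "$PWD/heap.options:/usr/share/elasticsearch/config/jvm.options.d/heap.options" \
    -e "discovery.type=single-node" \
    docker.elastic.co/elasticsearch/elasticsearch:7.12.0
}
```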

For testing, you can also manually set the heap size using the ES_JAVA_OPTS environment variable. For example, to use 16GB, specify -e ES_JAVA_OPTS='-Xms16g -Xmx16g' with docker run. The ES_JAVA_OPTS variable overrides all other JVM options. We do not recommend using ES_JAVA_OPTS in production. The docker-compose.yml file above sets the heap size to 512MB.

Pin your deployments to a specific version of the Elasticsearch Docker image, for example docker.elastic.co/elasticsearch/elasticsearch:7.12.0.

You should use a volume bound on /usr/share/elasticsearch/data for the following reasons:

- The data of your Elasticsearch node won’t be lost if the container is killed

- Elasticsearch is I/O sensitive and the Docker storage driver is not ideal for fast I/O

- It allows the use of advanced Docker volume plugins

If you are using the devicemapper storage driver, do not use the default loop-lvm mode. Configure docker-engine to use direct-lvm.

Consider centralizing your logs by using a different logging driver. Also note that the default json-file logging driver is not ideally suited for production use.

When you run in Docker, the Elasticsearch configuration files are loaded from /usr/share/elasticsearch/config/.

To use custom configuration files, you bind-mount the files over the configuration files in the image.

You can set individual Elasticsearch configuration parameters using Docker environment variables. The sample compose file and the single-node example use this method.

To use the contents of a file to set an environment variable, suffix the environment variable name with _FILE. This is useful for passing secrets such as passwords to Elasticsearch without specifying them directly.

For example, to set the Elasticsearch bootstrap password from a file, you can bind mount the file and set the ELASTIC_PASSWORD_FILE environment variable to the mount location. If you mount the password file to /run/secrets/bootstrapPassword.txt, specify:
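A sketch of those options in a full `docker run`. The host-side password file path is a hypothetical argument and must be absolute for a bind mount. `:ro` mounts it read-only.

```shell
# Supply the bootstrap password through a mounted file instead of the command line.
run_es_with_password_file() {
  local password_file="$1"   # hypothetical absolute host path to the password file
  docker run \
    -v "${password_file}:/run/secrets/bootstrapPassword.txt:ro" \
    -e ELASTIC_PASSWORD_FILE=/run/secrets/bootstrapPassword.txt \
    -e "discovery.type=single-node" \
    docker.elastic.co/elasticsearch/elasticsearch:7.12.0
}
```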

You can also override the default command for the image to pass Elasticsearch configuration parameters as command line options. For example:
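A sketch that replaces the image's default command with `bin/elasticsearch` and `-E` settings. The cluster name is an example. Discovery is also set with `-E` here on the assumption that, with a custom command, settings are safest passed on the command line rather than through environment variables.

```shell
# Pass settings as -E options by overriding the container's command.
run_es_with_cli_settings() {
  docker run docker.elastic.co/elasticsearch/elasticsearch:7.12.0 \
    bin/elasticsearch -Ecluster.name=mynewclustername -Ediscovery.type=single-node
}
```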

While bind-mounting your configuration files is usually the preferred method in production, you can also create a custom Docker image that contains your configuration.

Create custom config files and bind-mount them over the corresponding files in the Docker image. For example, to bind-mount custom_elasticsearch.yml with docker run, specify:
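A sketch assuming `custom_elasticsearch.yml` is in the current directory. The mounted file replaces the image's `elasticsearch.yml` entirely, so it needs to carry any settings (such as `network.host`) you still rely on.

```shell
# Mount a custom config over the image's elasticsearch.yml ("$PWD" makes the path absolute).
run_es_with_custom_config() {
  docker run \
    -v "$PWD/custom_elasticsearch.yml:/usr/share/elasticsearch/config/elasticsearch.yml" \
    docker.elastic.co/elasticsearch/elasticsearch:7.12.0
}
```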

The container runs Elasticsearch as user elasticsearch using uid:gid 1000:0. Bind mounted host directories and files must be accessible by this user, and the data and log directories must be writable by this user.

By default, Elasticsearch will auto-generate a keystore file for secure settings. This file is obfuscated but not encrypted. If you want to encrypt your secure settings with a password, you must use the elasticsearch-keystore utility to create a password-protected keystore and bind-mount it to the container as /usr/share/elasticsearch/config/elasticsearch.keystore. In order to provide the Docker container with the password at startup, set the Docker environment value KEYSTORE_PASSWORD to the value of your password. For example, a docker run command might have the following options:
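A sketch with the keystore path and password as hypothetical arguments. The keystore must already have been created with `elasticsearch-keystore`, and its host path must be absolute.

```shell
# Mount a password-protected keystore and hand its password to the container.
run_es_with_keystore() {
  local keystore="$1"   # hypothetical absolute path to the keystore file
  local password="$2"   # the keystore password
  docker run \
    -v "${keystore}:/usr/share/elasticsearch/config/elasticsearch.keystore" \
    -e KEYSTORE_PASSWORD="$password" \
    -e "discovery.type=single-node" \
    docker.elastic.co/elasticsearch/elasticsearch:7.12.0
}
```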

In some environments, it might make more sense to prepare a custom image that contains your configuration. A Dockerfile to achieve this might be as simple as:

You could then build and run the image with:
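A sketch of both steps. It assumes `elasticsearch.yml` sits next to the Dockerfile, and `elasticsearch-custom` is only a local tag. `--chown` keeps the copied file owned by the elasticsearch user, and the anonymous volume on the data path keeps data out of the container layer.

```shell
# Bake a custom elasticsearch.yml into a derived image.
cat > Dockerfile <<'EOF'
FROM docker.elastic.co/elasticsearch/elasticsearch:7.12.0
COPY --chown=elasticsearch:elasticsearch elasticsearch.yml /usr/share/elasticsearch/config/
EOF

build_and_run_custom_es() {
  docker build --tag=elasticsearch-custom .
  docker run -ti -v /usr/share/elasticsearch/data elasticsearch-custom
}
```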

Some plugins require additional security permissions. You must explicitly accept them either by:

- Attaching a tty when you run the Docker image and allowing the permissions when prompted.

- Inspecting the security permissions and accepting them (if appropriate) by adding the --batch flag to the plugin install command.

See Plugin management for more information.
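A sketch of the non-interactive route in a derived image. `analysis-icu` is only an example plugin, and the relative `bin/elasticsearch-plugin` path relies on the image's working directory being `/usr/share/elasticsearch`. Review a plugin's requested permissions before baking `--batch` into a build.

```shell
# Derived image that installs a plugin without the interactive permission prompt.
cat > Dockerfile.plugins <<'EOF'
FROM docker.elastic.co/elasticsearch/elasticsearch:7.12.0
# --batch accepts any additional security permissions the plugin requests.
RUN bin/elasticsearch-plugin install --batch analysis-icu
EOF
```

Build it with `docker build -f Dockerfile.plugins .`.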

You now have a test Elasticsearch environment set up. Before you start serious development or go into production with Elasticsearch, you must do some additional setup:

- Learn how to configure Elasticsearch.

- Configure important Elasticsearch settings.

- Configure important system settings.
